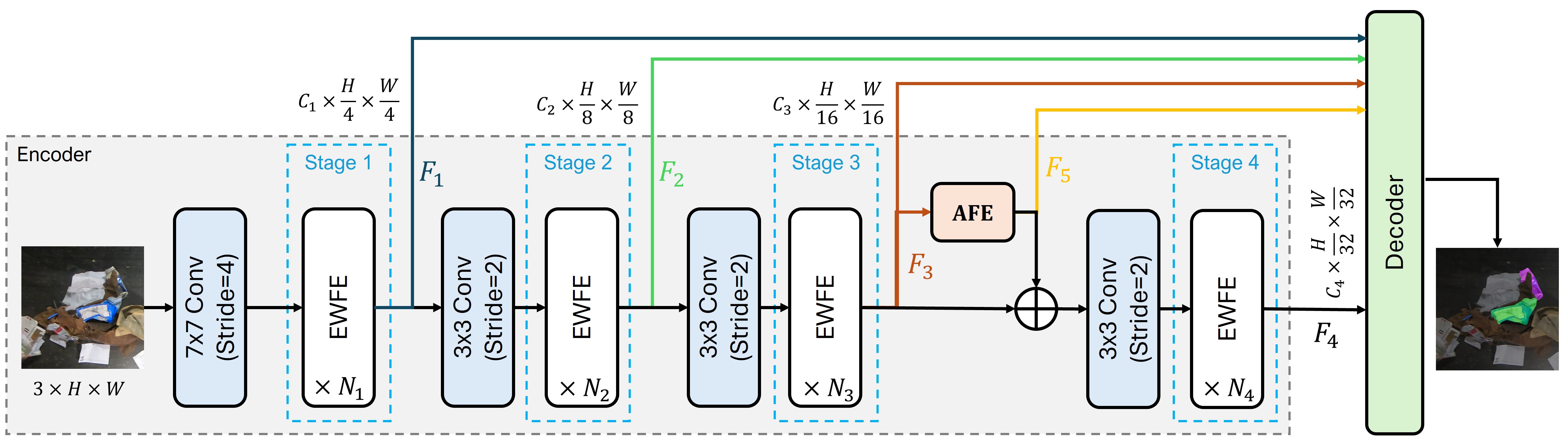

EWSegNet Architecture

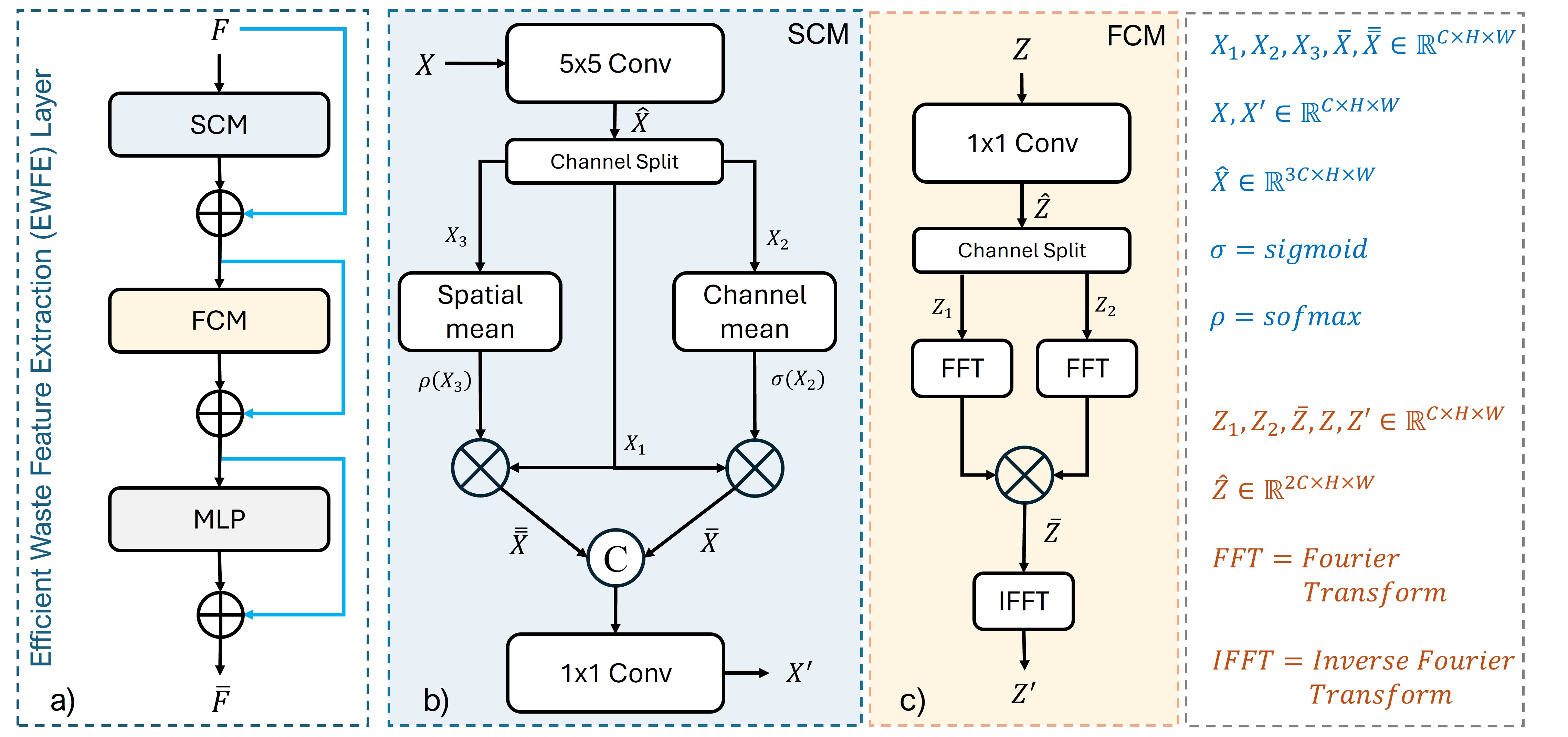

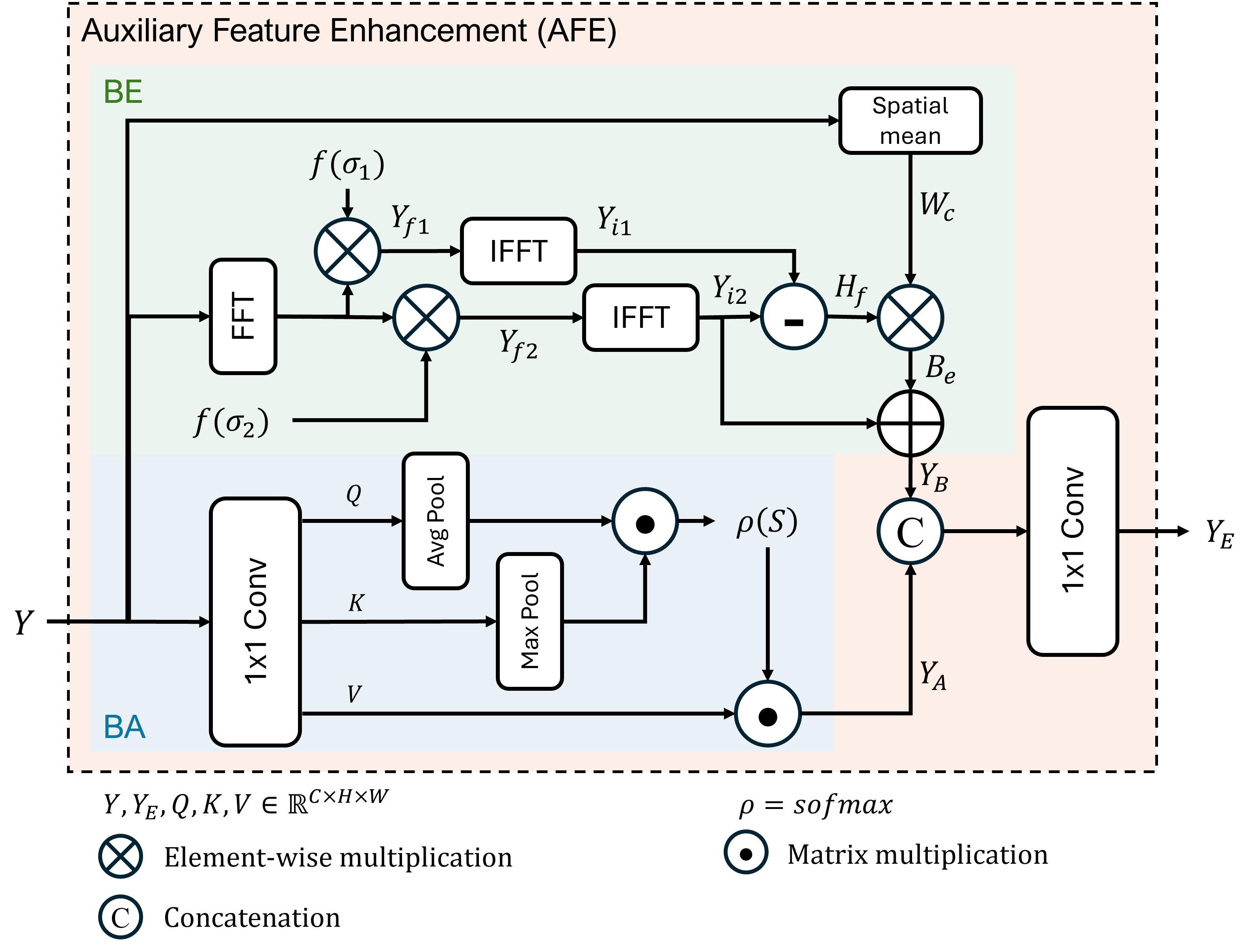

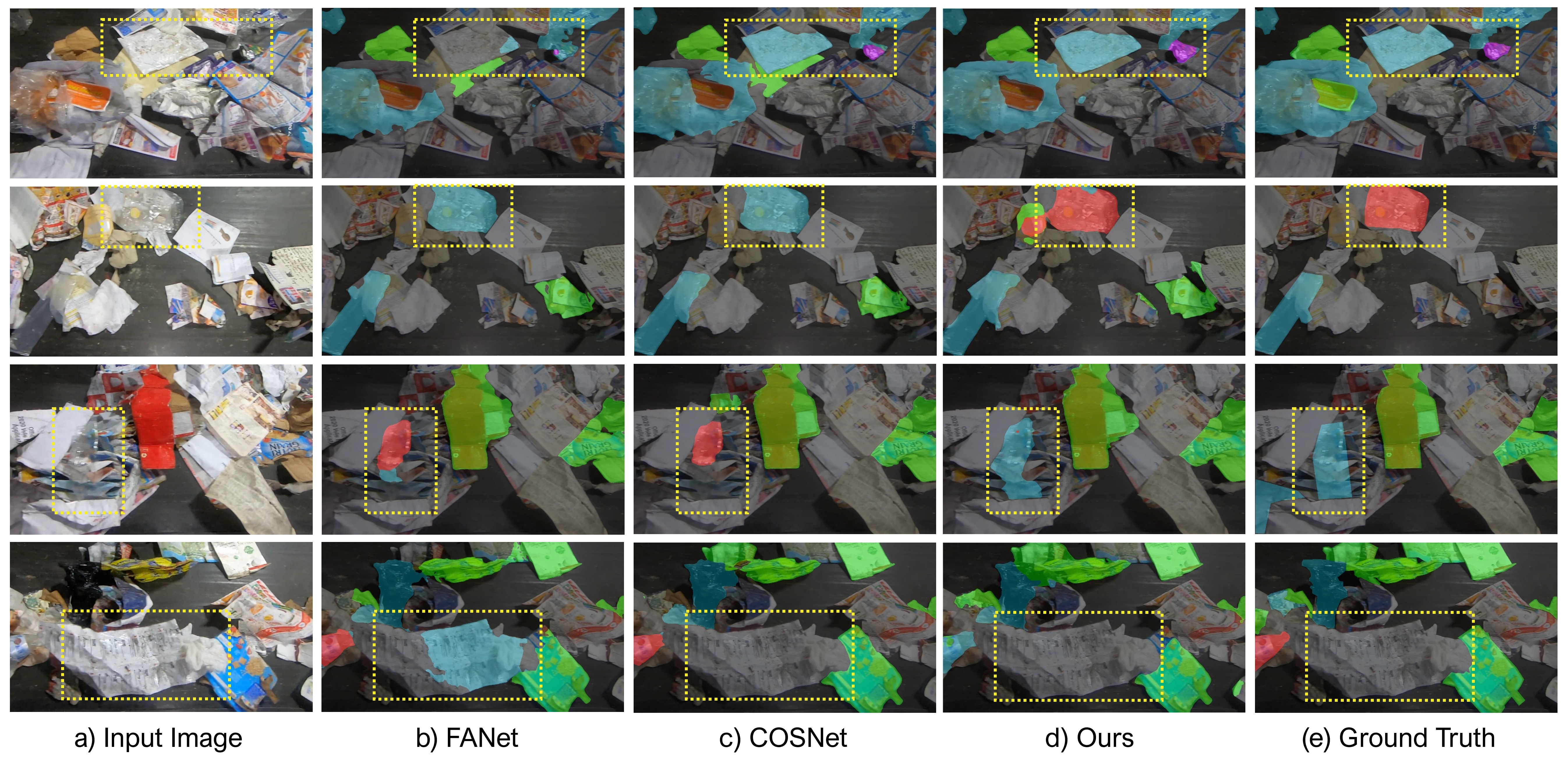

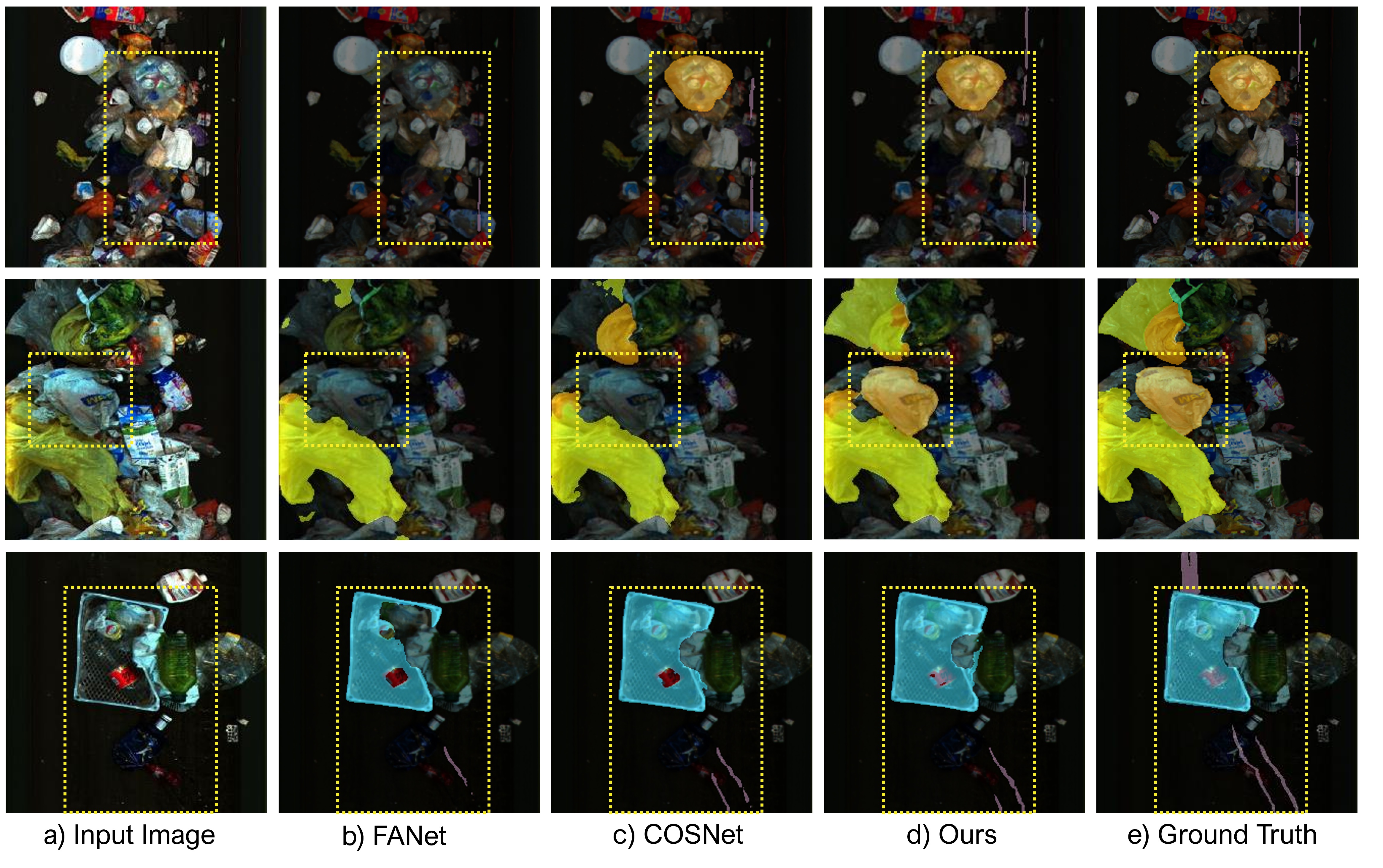

Overall framework of the proposed effective waste segmentation network (EWSegNet). The encoder consists of four stages that produce multiscale feature representations (F1, F2, F3, F4). Each stage i (i ∈ {1, 2, 3, 4}) contains Nᵢ EWFE layers, and a convolution layer before each stage downsamples the feature maps. The stage-3 features are fed to the auxiliary feature enhancement (AFE) module, which emphasizes boundaries and blob regions and produces the feature maps F5. Finally, these multiscale features are fed to the decoder to obtain the segmentation map.
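The dataflow in the caption can be traced with a minimal shape-only sketch. It is not the authors' implementation. Only the four-stage structure, the downsampling convolution before each stage, the Nᵢ EWFE layers per stage, the AFE branch on stage 3 and the decoder consuming the multiscale features come from the caption. The strides, channel widths, layer counts and all function names (`downsample_conv`, `ewfe_layer`, `afe_module`, `decoder`) are hypothetical placeholders. The sketch also assumes that EWFE layers and AFE preserve feature-map shape and that the decoder predicts at input resolution.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Shape:
    """Shape of a feature map: channels x height x width."""
    channels: int
    height: int
    width: int


# Hypothetical hyperparameters: the caption does not specify these values.
STAGE_STRIDES = [4, 2, 2, 2]        # downsampling factor of the conv before each stage
STAGE_CHANNELS = [32, 64, 160, 256]  # output channels of each stage
LAYERS_PER_STAGE = [2, 2, 2, 2]      # N_1..N_4, the EWFE layer counts


def downsample_conv(x: Shape, stride: int, out_channels: int) -> Shape:
    # Strided convolution: spatial size shrinks by `stride`, channel count changes.
    return Shape(out_channels, x.height // stride, x.width // stride)


def ewfe_layer(x: Shape) -> Shape:
    # Placeholder: an EWFE layer is assumed to preserve the feature-map shape.
    return x


def afe_module(x: Shape) -> Shape:
    # Placeholder: AFE is assumed to preserve the shape of its stage-3 input.
    return x


def encoder(image: Shape) -> Dict[str, Shape]:
    """Run the four stages and the AFE branch, returning F1..F5."""
    feats: Dict[str, Shape] = {}
    x = image
    for i in range(4):
        # A convolution downsamples the feature maps before stage i + 1.
        x = downsample_conv(x, STAGE_STRIDES[i], STAGE_CHANNELS[i])
        for _ in range(LAYERS_PER_STAGE[i]):
            x = ewfe_layer(x)
        feats[f"F{i + 1}"] = x
    # Stage-3 features feed the AFE module, producing F5.
    feats["F5"] = afe_module(feats["F3"])
    return feats


def decoder(feats: Dict[str, Shape], image: Shape, num_classes: int) -> Shape:
    # Placeholder: assume the decoder fuses all five feature maps and
    # predicts a per-pixel class map at the input resolution.
    assert set(feats) == {"F1", "F2", "F3", "F4", "F5"}
    return Shape(num_classes, image.height, image.width)


if __name__ == "__main__":
    img = Shape(3, 512, 512)
    features = encoder(img)
    for name, shape in features.items():
        print(name, shape)
    print("segmentation map", decoder(features, img, num_classes=2))
```

With these assumed strides, a 512 × 512 input yields F1 through F4 at 1/4, 1/8, 1/16 and 1/32 of the input resolution, and F5 matches F3 because AFE is modeled as shape-preserving. Swapping in the paper's actual hyperparameters only requires changing the three lists at the top.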